eCommerce Insights

- Entity Relationship Diagram

- Table Description

- Data Sources

- Data Destinations

- How to Use Template

- Authorizing eCommerce Data Sources

- Authorizing Data Destinations

- Most Common Errors

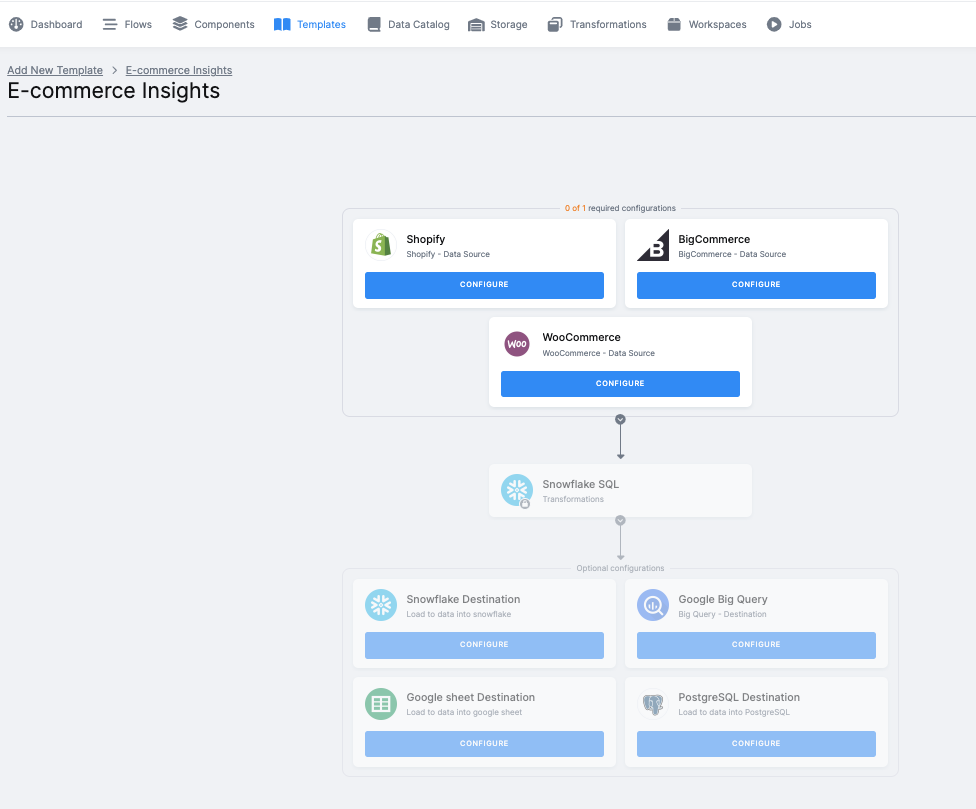

With this end-to-end flow you can extract your updated data from an eCommerce platform and bring it into Keboola. After all the necessary tasks are performed on the data, you can transform the results into visualizations in any BI tool of your choice.

The flow, in a nutshell:

- First, the eCommerce data source connector will collect data from your account (data about orders, products, inventory, and customers).

- We then create the output tables. We add NULL values if any columns are missing. We also check the data and perform an RFM analysis.

- The data is then written into your selected destination, for example, to a Snowflake database via the Snowflake data destination connector.

- Finally, you will run the entire flow (i.e., the sequence of all the prepared, above-mentioned steps, in the correct order). The eCommerce data source connector, all data manipulations and analyses, and the data destination connector of your choice will be processed.
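The step that adds NULL values for missing columns can be pictured with a minimal Python sketch. The column names in `EXPECTED_COLUMNS` and the helper `add_missing_columns` are hypothetical; the template's real schema and implementation are not described on this page.

```python
from typing import Any

# Hypothetical expected schema for one output table (an assumption for illustration).
EXPECTED_COLUMNS = ["order_id", "customer_id", "order_date", "total_price", "taxes"]


def add_missing_columns(rows: list[dict[str, Any]], expected: list[str]) -> list[dict[str, Any]]:
    """Return rows with exactly the expected columns, in order.

    Columns absent from a row are filled with None (NULL); columns that are
    not in the expected list are dropped.
    """
    return [{col: row.get(col) for col in expected} for row in rows]


rows = [
    {"order_id": "1001", "customer_id": "c1", "order_date": "2023-01-05", "total_price": 49.9},
    {"order_id": "1002", "customer_id": "c2", "order_date": "2023-01-06", "total_price": 15.0, "taxes": 3.0},
]
normalized = add_missing_columns(rows, EXPECTED_COLUMNS)
```

Every row in `normalized` then has the same set of columns, so downstream steps can rely on a stable table shape regardless of what the source returned.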

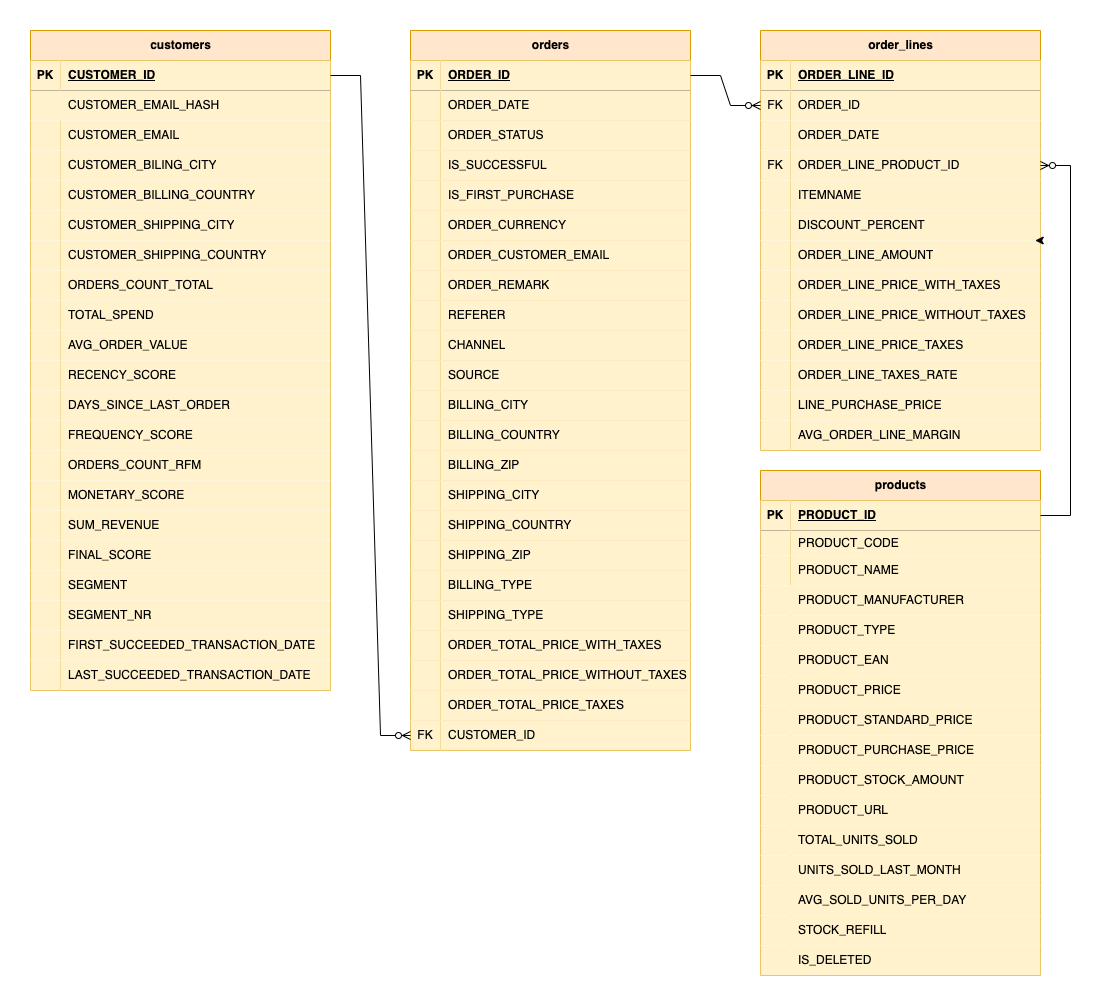

Entity Relationship Diagram

An entity-relationship diagram is a specialized graphic that illustrates the relationships between entities in a data destination.

Table Description

| Name | Description |
|---|---|
| ORDERS | contains a list of customer orders, incl. order date, purchase, price, and taxes |
| ORDER LINES | contains the individual items of orders, incl. order date, amount of bought items, item prices, and item average margin |
| CUSTOMERS | contains a list of customers, incl. email, customer billing and shipping information, total orders count and total orders value, as well as the actual RFM segment and score of each customer |
| PRODUCTS | contains a list of products, incl. product type, product manufacturer, product price, stock amount, number of units sold in the last 30 days, and information about stock refill |
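RFM stands for recency, frequency, and monetary value. How the template turns these into a segment and score is not described here, so the following is only a sketch of the three underlying metrics. It assumes hypothetical order records of the form `(customer_id, order_date, order_value)`.

```python
from collections import defaultdict
from datetime import date

# Hypothetical order records: (customer_id, order_date, order_value).
orders = [
    ("c1", date(2023, 1, 5), 50.0),
    ("c1", date(2023, 3, 1), 30.0),
    ("c2", date(2022, 11, 20), 200.0),
]


def rfm_metrics(orders, today):
    """Compute per-customer recency (days since last order),
    frequency (number of orders), and monetary value (sum of order values)."""
    last_order = {}
    frequency = defaultdict(int)
    monetary = defaultdict(float)
    for customer_id, order_date, value in orders:
        if customer_id not in last_order or order_date > last_order[customer_id]:
            last_order[customer_id] = order_date
        frequency[customer_id] += 1
        monetary[customer_id] += value
    return {
        c: {
            "recency_days": (today - last_order[c]).days,
            "frequency": frequency[c],
            "monetary": monetary[c],
        }
        for c in last_order
    }


metrics = rfm_metrics(orders, today=date(2023, 3, 31))
```

A scoring scheme (for example, ranking customers into bands on each metric) would be applied on top of these values; the bands themselves are a design choice and are not specified on this page.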

Data Sources

These are the data sources that are available in Public Beta:

- Shopify

- WooCommerce

- BigCommerce

Data Destinations

These data destinations are available in Public Beta:

- Snowflake database provided by Keboola

- Snowflake database

- Google BigQuery database

- Google Sheets

- PostgreSQL

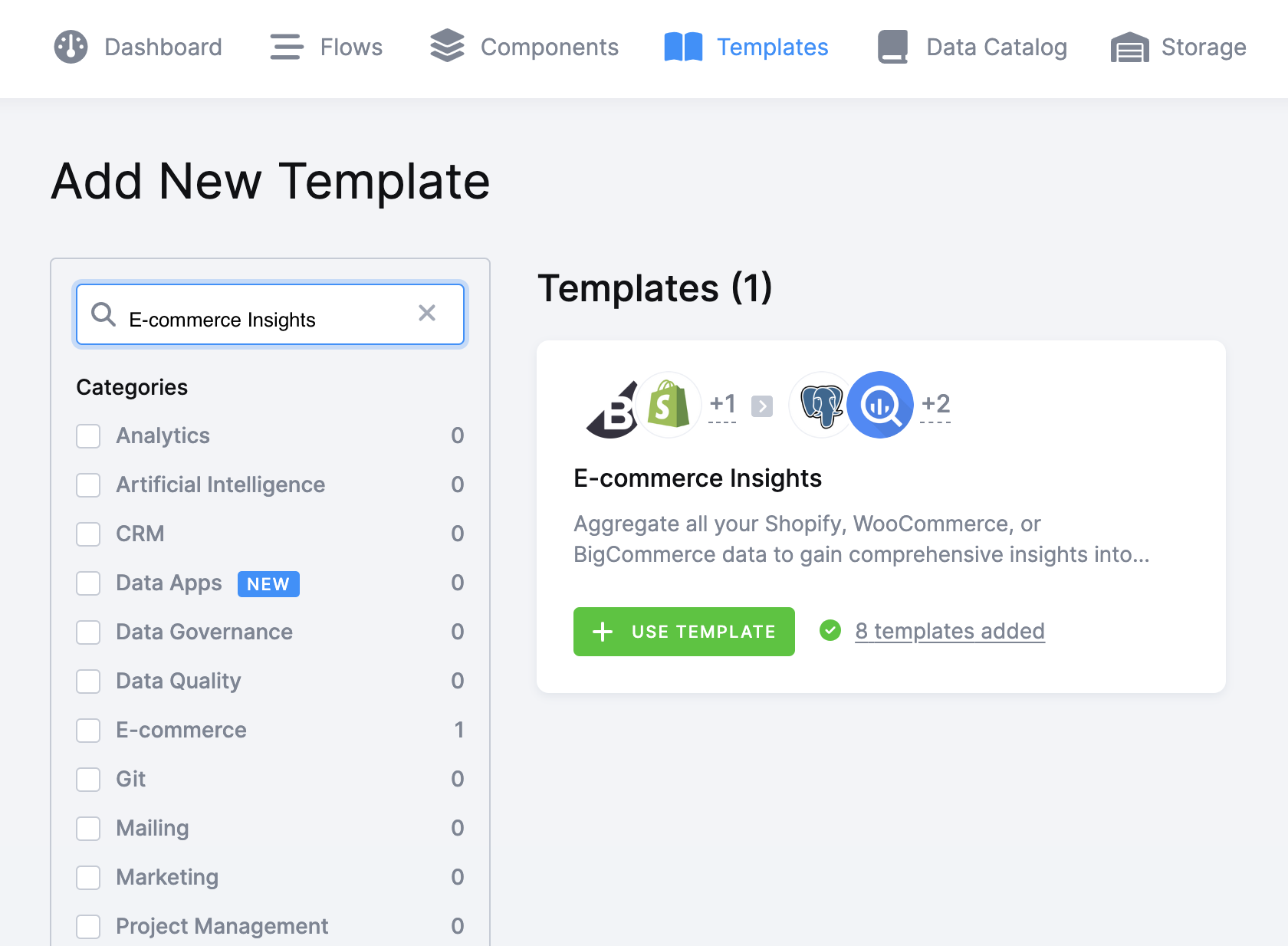

How to Use Template

The process is simple. We will guide you through it, and, when needed, ask you to provide your credentials and authorize the data destination connector.

Select the template from the Templates tab in your Keboola project. When you are done, click + Use Template.

This page contains information about the template. Click + Set Up Template.

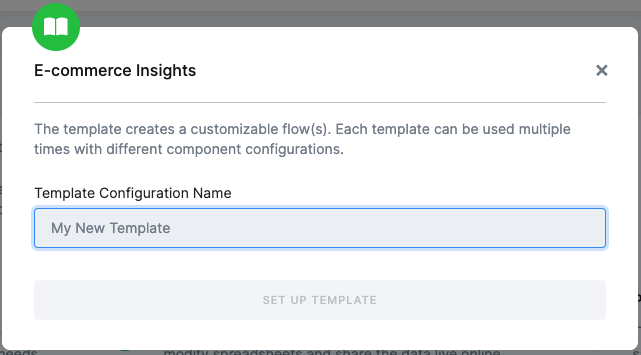

Now enter a name for the template instance that you are about to create. This allows you to use the template as many times as you want. It is important to keep things organized.

After clicking Set Up Template, you will see the template builder. Select exactly one of three eCommerce data sources. Fill in all needed credentials and perform the required OAuth authorizations.

Important: Make sure to follow all the steps very carefully to prevent the newly created flow from failing because of any user authorization problems. If you are struggling with this part, go to the section Authorizing Data Destinations below.

Follow the steps one by one and authorize your data sources. An eCommerce data source is required. In this case, Shopify is selected.

Finally, the destination must be authorized as well.

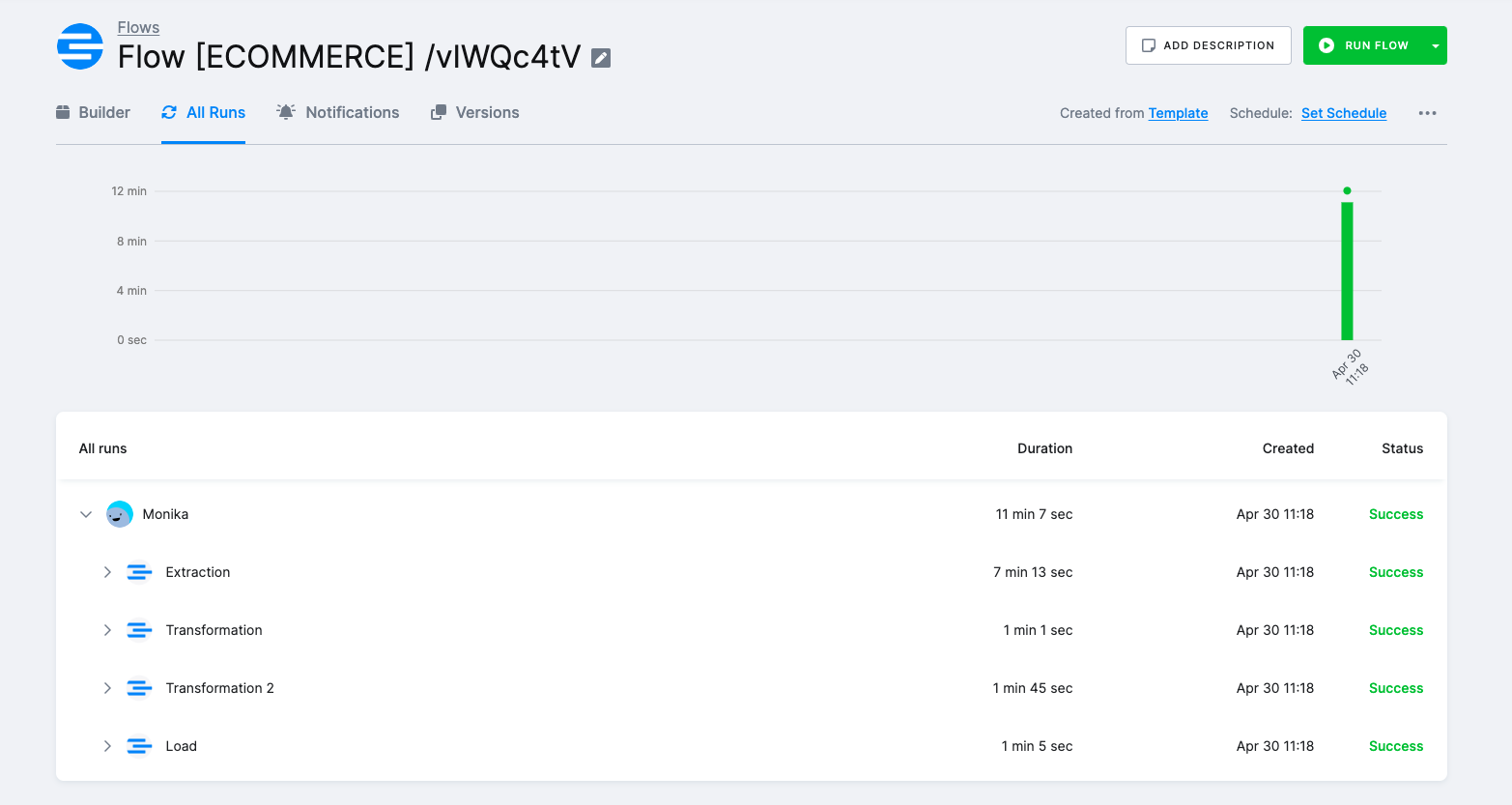

When you are finished, click Save in the top right corner. The template builder will create your new configuration, and when it is done, you will be redirected to the Template Catalogue, where you can see the newly created flow.

Click Run Template and start building your visualizations a few minutes later.

Authorizing eCommerce Data Sources

To use a selected data source connector, you must first authorize the data source.

At least one data source must be used in order to create a working flow.

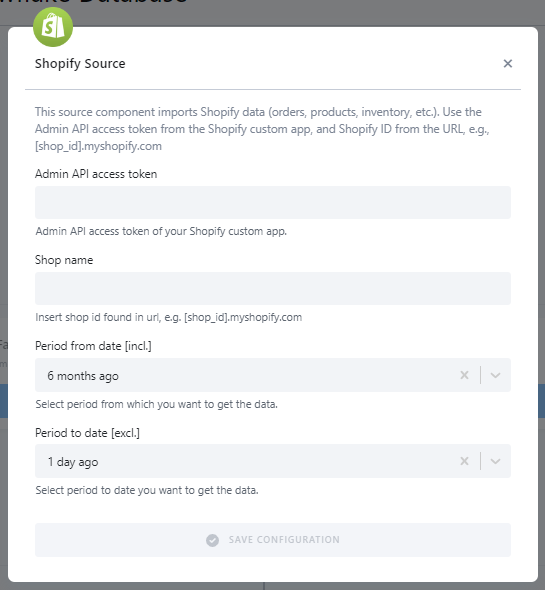

Shopify Analytics

To enable this application, you must:

- Enable private app development for your store.

- Create a private application.

- Enable Read access under ADMIN API PERMISSIONS for the following objects: Orders, Products, Inventory, and Customers.

Additional documentation is available here.
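Before running the flow, it can help to confirm that the app has read access to all four objects. The sketch below assumes the permissions are expressed as scope names of the form `read_<object>`; treat both the scope names and the helper `missing_scopes` as assumptions and check them against your store's app settings.

```python
# Assumed scope names for read access to Orders, Products, Inventory, and Customers.
REQUIRED_SCOPES = {"read_orders", "read_products", "read_inventory", "read_customers"}


def missing_scopes(granted):
    """Return the required scopes that are not in the granted list, sorted by name."""
    return sorted(REQUIRED_SCOPES - set(granted))


# Example: an app that was only granted access to orders and products.
missing = missing_scopes(["read_orders", "read_products"])
```

An empty result means all four read permissions are present.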

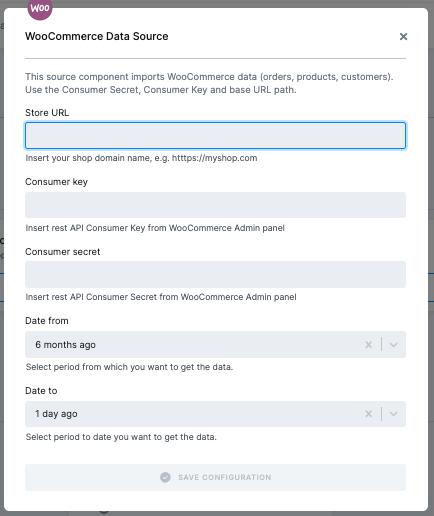

WooCommerce Analytics

To download data from WooCommerce we need to configure:

- Store_url: Website domain name where WooCommerce is hosted, e.g., https://myshop.com

- consumer_key: REST API Consumer Key from the WooCommerce Admin panel

- consumer_secret: REST API Consumer Secret from the WooCommerce Admin panel

- date_from: Inclusive date in YYYY-MM-DD format or a string, e.g., 5 days ago, 1 month ago, yesterday, etc.

- date_to: Exclusive date in YYYY-MM-DD format or a string, e.g., 5 days ago, 1 month ago, yesterday, etc.
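Because date_from is inclusive and date_to is exclusive, the two values describe a half-open window. The sketch below illustrates that behavior. The parser `resolve_date` is hypothetical and handles only YYYY-MM-DD, `yesterday`, and `N days ago`; the connector's own parser accepts more forms (such as `1 month ago`) and may resolve them differently.

```python
import re
from datetime import date, timedelta


def resolve_date(value: str, today: date) -> date:
    """Resolve 'YYYY-MM-DD', 'yesterday', or 'N days ago' to a date (illustrative parser only)."""
    value = value.strip().lower()
    if value == "yesterday":
        return today - timedelta(days=1)
    match = re.fullmatch(r"(\d+) days? ago", value)
    if match:
        return today - timedelta(days=int(match.group(1)))
    return date.fromisoformat(value)


def in_window(day: date, date_from: date, date_to: date) -> bool:
    """True if day falls in [date_from, date_to): start included, end excluded."""
    return date_from <= day < date_to


today = date(2023, 6, 10)
start = resolve_date("5 days ago", today)  # 2023-06-05
end = resolve_date("yesterday", today)     # 2023-06-09
```

With these values, an order dated 2023-06-05 is inside the window, while an order dated 2023-06-09 is not.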

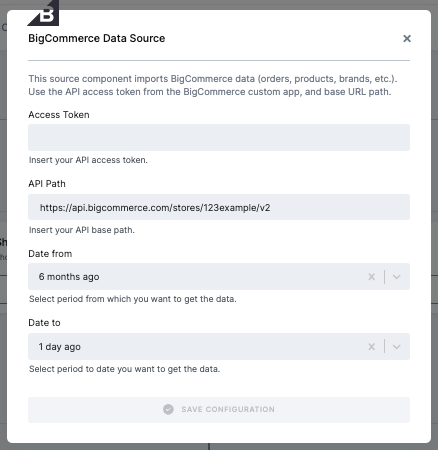

BigCommerce Analytics

To authorize the BigCommerce data source, enter the following information:

- Access Token - V2/V3 API access token with read-only OAuth scope

- API Path

Both can be obtained by following this guide.

Additional documentation is available here.

Authorizing Data Destinations

When creating a working flow, you can select one or more data destinations.

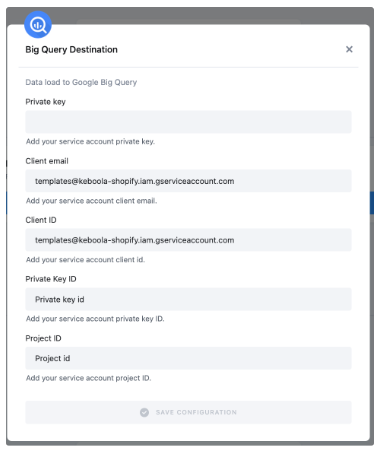

BigQuery Database

To configure the data destination connector, you need to set up a Google Service Account and create a new JSON key.

A detailed guide is available here.
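A quick sanity check of the downloaded JSON key can catch a wrong file before it is pasted into the connector. The sketch below checks a few fields that Google service account keys normally contain; the helper `check_service_account_key` is hypothetical and does not verify that the key actually works.

```python
import json

# Fields normally present in a Google service account JSON key.
REQUIRED_KEY_FIELDS = ("type", "project_id", "private_key", "client_email")


def check_service_account_key(raw_json: str) -> list[str]:
    """Return a list of problems found in the key; an empty list means it looks plausible."""
    key = json.loads(raw_json)
    problems = [f"missing field: {name}" for name in REQUIRED_KEY_FIELDS if not key.get(name)]
    if key.get("type") not in (None, "service_account"):
        problems.append("type is not 'service_account'")
    return problems


# Placeholder values only; a real key contains a full private key and more fields.
sample_key = json.dumps({
    "type": "service_account",
    "project_id": "demo-project",
    "private_key": "-----BEGIN PRIVATE KEY-----\n...\n-----END PRIVATE KEY-----\n",
    "client_email": "writer@demo-project.iam.gserviceaccount.com",
})
problems = check_service_account_key(sample_key)
```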

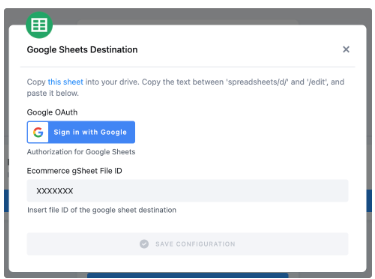

Google Sheets

Authorize your Google account.

Duplicate the sheet into your Google Drive and paste the file ID back into Keboola. This is needed to achieve correct mapping in your duplicated Google sheet.
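The file ID is the long token in the sheet's URL, between `/d/` and the next slash. A minimal sketch for extracting it follows; the helper name `extract_file_id` and the example ID are made up for illustration.

```python
import re


def extract_file_id(url_or_id: str) -> str:
    """Return the file ID from a Google Sheets URL, or the trimmed input if it is already an ID."""
    match = re.search(r"/d/([a-zA-Z0-9_-]+)", url_or_id)
    return match.group(1) if match else url_or_id.strip()


# Hypothetical URL of the duplicated sheet.
file_id = extract_file_id("https://docs.google.com/spreadsheets/d/1AbC_dEf-123/edit#gid=0")
```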

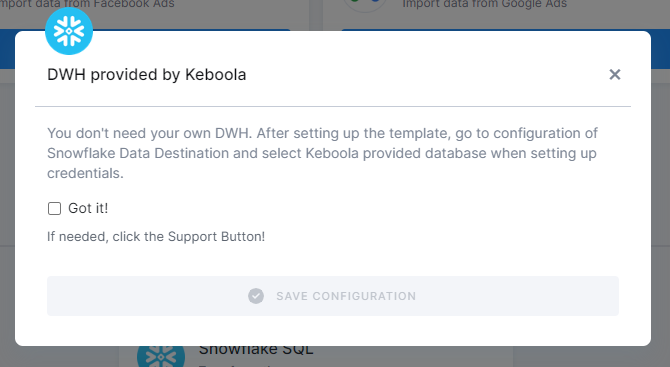

Snowflake Database Provided by Keboola

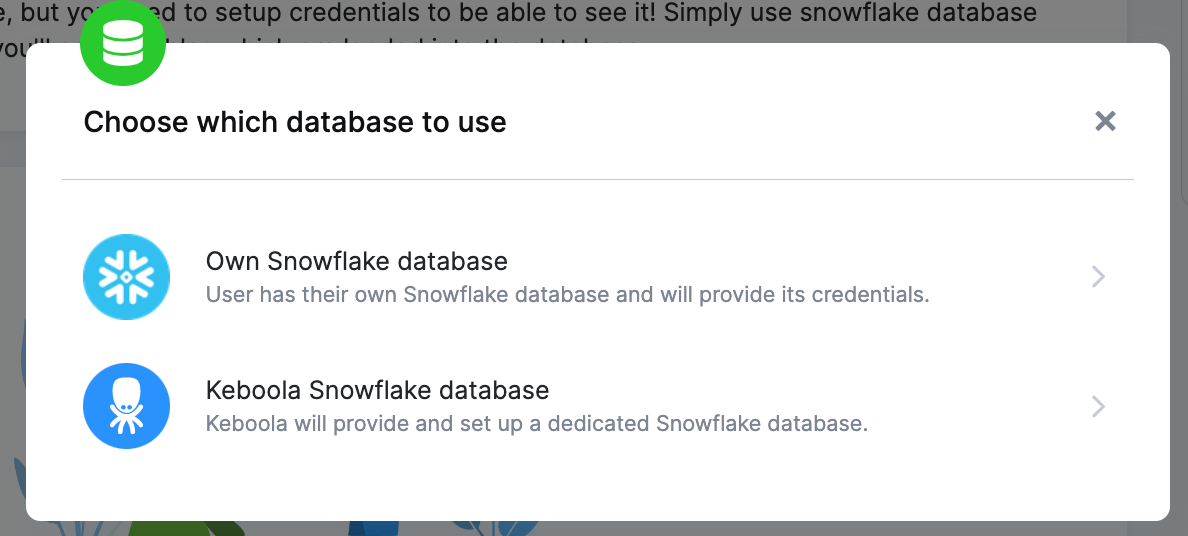

If you do not have your own data warehouse, follow the instructions and we will create a database for you:

- Configure the Snowflake destination and click Save Configuration.

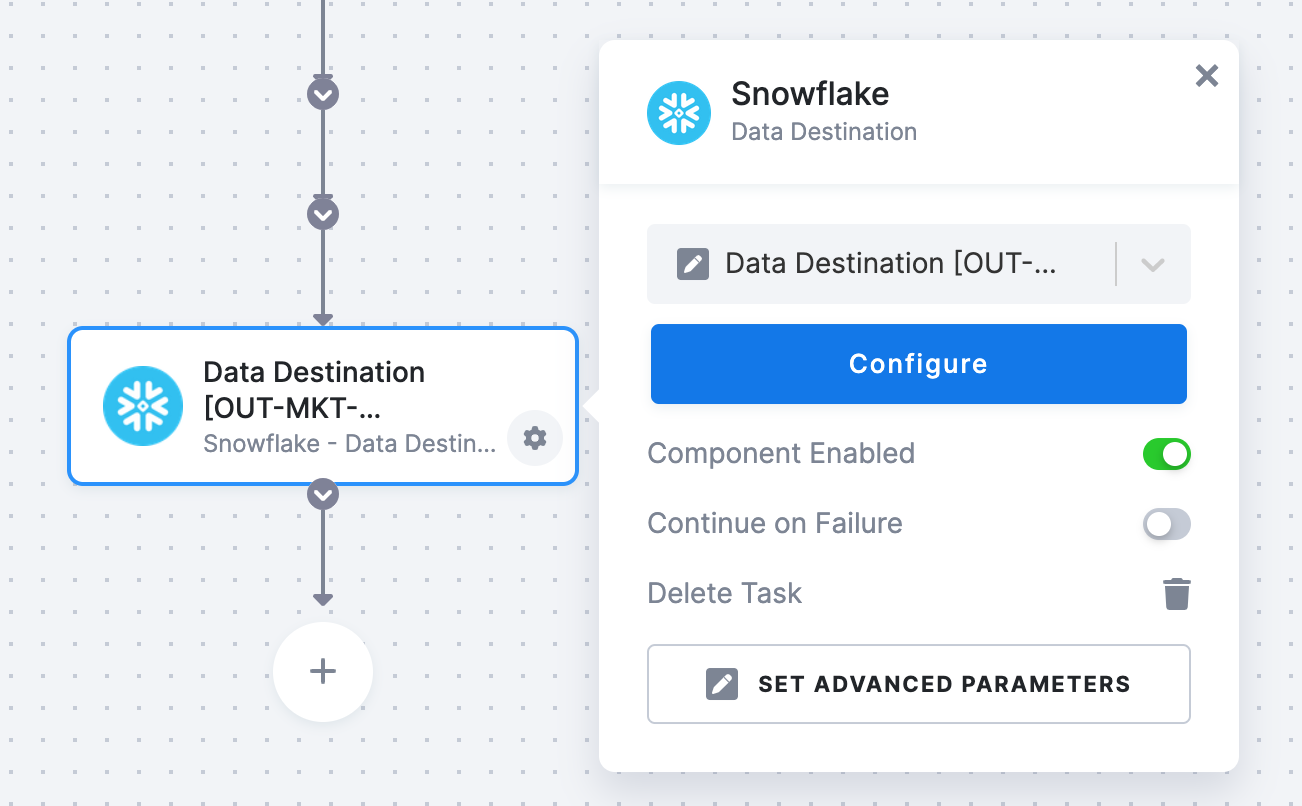

- After clicking Save, the template will be used in your project. You will see a flow.

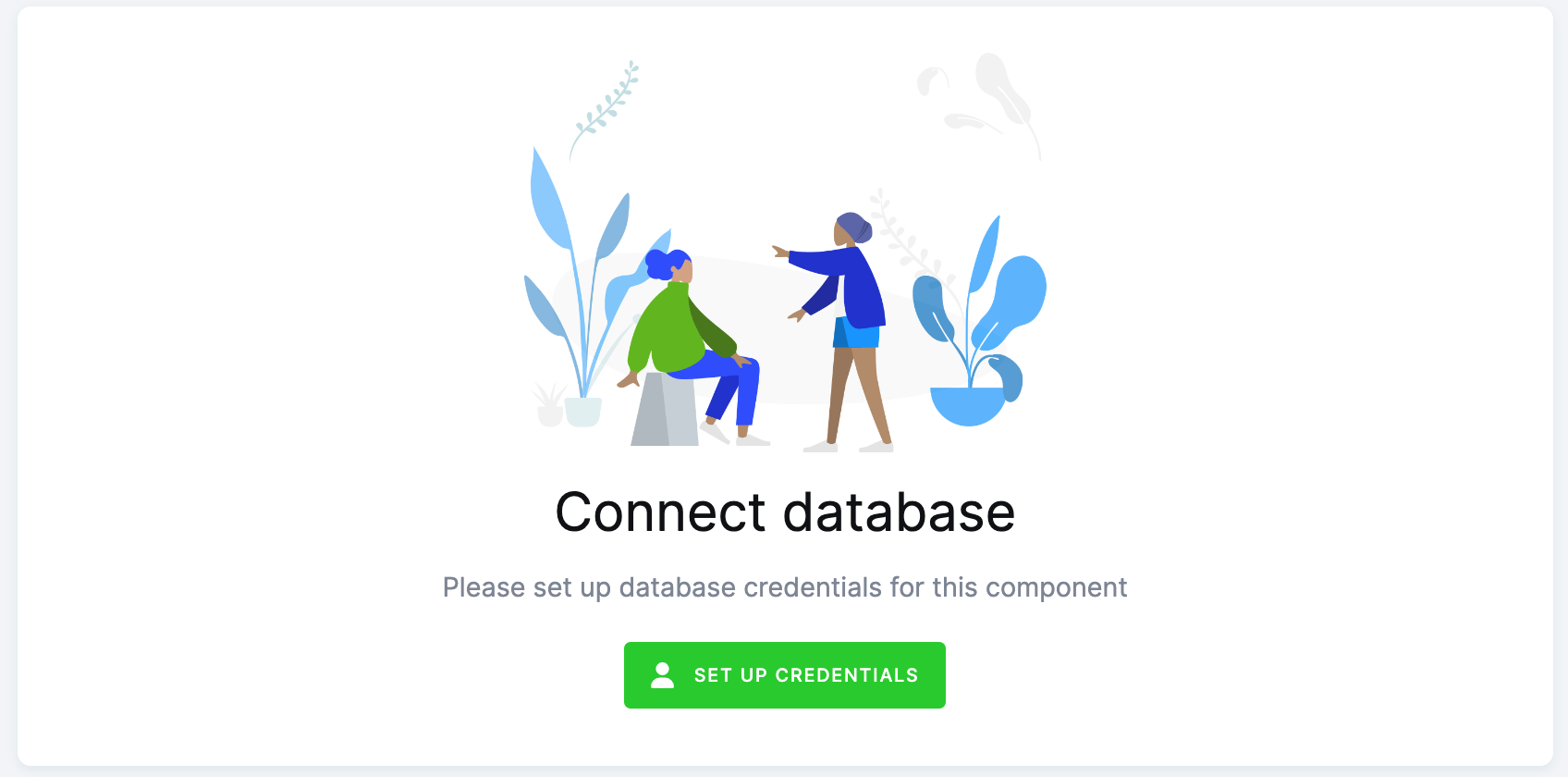

- Go there and click on Snowflake Data Destination to configure it. You will be redirected to the data destination configuration and asked to set up credentials.

- Select Keboola Snowflake database.

- Then go back to the flow and click Run.

Everything is set up.

Snowflake Database

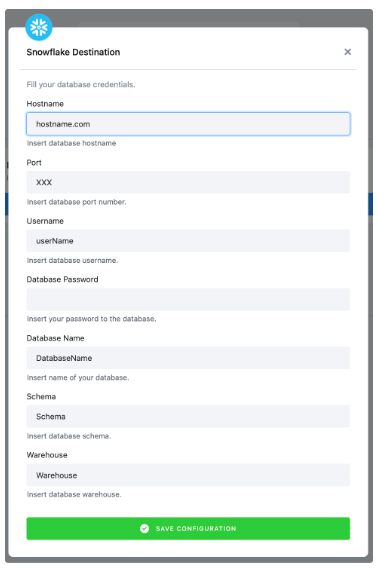

If you want to use your own Snowflake database, you must provide the host name (account name), user name, password, database name, schema, and a warehouse.

We highly recommend that you create a dedicated user for the data destination connector in your Snowflake database. Then you must provide the user with access to the Snowflake Warehouse.

Warning: Keep in mind that Snowflake is case sensitive and if identifiers are not quoted, they are converted to upper case. So if you run, for example, a query CREATE SCHEMA john.doe;, you must enter the schema name as DOE in the data destination connector configuration.
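The rule in the warning can be expressed as a small helper that predicts the name Snowflake stores for an identifier as written in SQL. The function `stored_identifier` is an illustrative sketch, not part of any Snowflake or Keboola library.

```python
def stored_identifier(identifier: str) -> str:
    """Return the name Snowflake stores for an identifier as written in SQL."""
    if len(identifier) >= 2 and identifier.startswith('"') and identifier.endswith('"'):
        # Quoted identifier: case is preserved; a doubled quote stands for one quote.
        return identifier[1:-1].replace('""', '"')
    # Unquoted identifier: folded to upper case.
    return identifier.upper()


unquoted = stored_identifier("doe")    # as in CREATE SCHEMA john.doe;
quoted = stored_identifier('"doe"')    # as in CREATE SCHEMA john."doe";
```

Here `unquoted` is `DOE` and `quoted` is `doe`, so the value entered in the connector configuration must match whichever form was used when the schema was created.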

More info here.

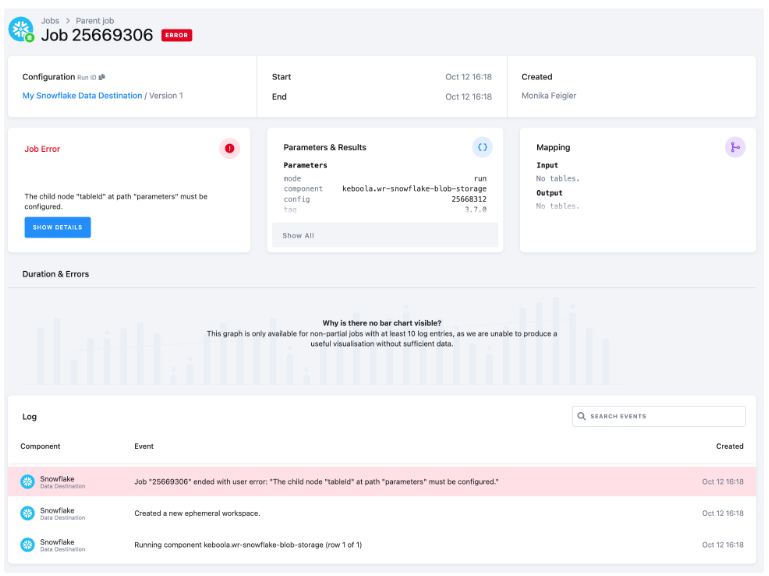

Most Common Errors

Before turning to the Keboola support team for help, make sure your error is not a common problem that can be solved without our help.

Missing Credentials to Snowflake Database

If you see the error pictured below, you have probably forgotten to set up the Snowflake database.

Click on the highlighted text under Configuration in the top left corner. This will redirect you to the Snowflake Database connector. Now follow the instructions in the section Snowflake Database Provided by Keboola under Authorizing Data Destinations.

Then go to the Jobs tab and Run the flow again.