Google BigQuery

The BigQuery data source connector loads data from BigQuery and brings it into Keboola. Running the connector creates a background job that

- executes the queries in Google BigQuery.

- saves the results to Google Cloud Storage.

- exports the results from Google Cloud Storage and stores them in specified tables in Keboola Storage.

- removes the results from Google Cloud Storage.
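To make the order of these steps concrete, here is a minimal in-memory sketch of the job. It is not Keboola's implementation. Plain dicts stand in for the Cloud Storage bucket and for Keboola Storage, and the names `run_extraction`, `execute_query`, and the `unload/…csv` path are hypothetical. The sketch removes staged results in a `finally` block so the temporary bucket is left empty; this page does not say how the real connector behaves when a step fails.

```python
def run_extraction(queries, execute_query, bucket, keboola_storage):
    """Sketch of the job's four steps for each configured query.

    queries:         hypothetical mapping of output table name -> SQL text
    execute_query:   hypothetical callable standing in for BigQuery (SQL -> rows)
    bucket:          dict standing in for the temporary Cloud Storage bucket
    keboola_storage: dict standing in for tables in Keboola Storage
    """
    staged = []
    try:
        for table_name, sql in queries.items():
            rows = execute_query(sql)                         # 1. execute the query in BigQuery
            path = f"unload/{table_name}.csv"
            bucket[path] = rows                               # 2. save the result to Cloud Storage
            staged.append(path)
            keboola_storage[table_name] = list(bucket[path])  # 3. store it in Keboola Storage
    finally:
        for path in staged:
            bucket.pop(path, None)                            # 4. remove the temporary result
    return keboola_storage
```

For example, `run_extraction({"orders": "SELECT 1 AS x"}, lambda sql: [{"x": 1}], {}, {})` fills the `orders` table and leaves the stand-in bucket empty.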

Note: Using the Google BigQuery connector is also described in our Getting Started Tutorial.

Initial Setup

Service Account

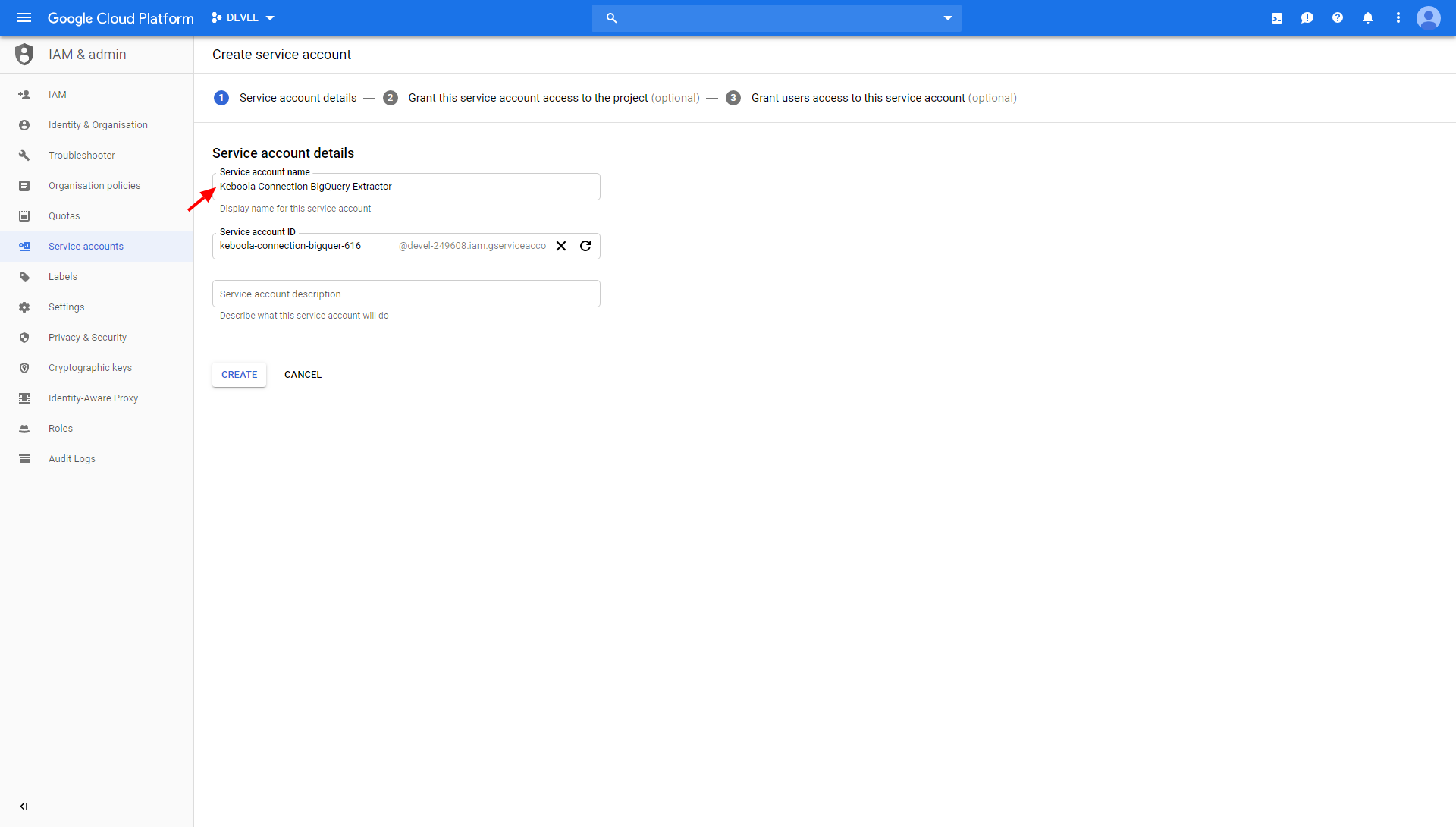

To access and extract data from your BigQuery dataset, you need to set up a Google service account. Go to Google Cloud Platform Console > IAM & admin > Service accounts and select the project you want the data source connector to have access to. Click Create Service Account and enter a Service account name (e.g., Keboola BigQuery connector).

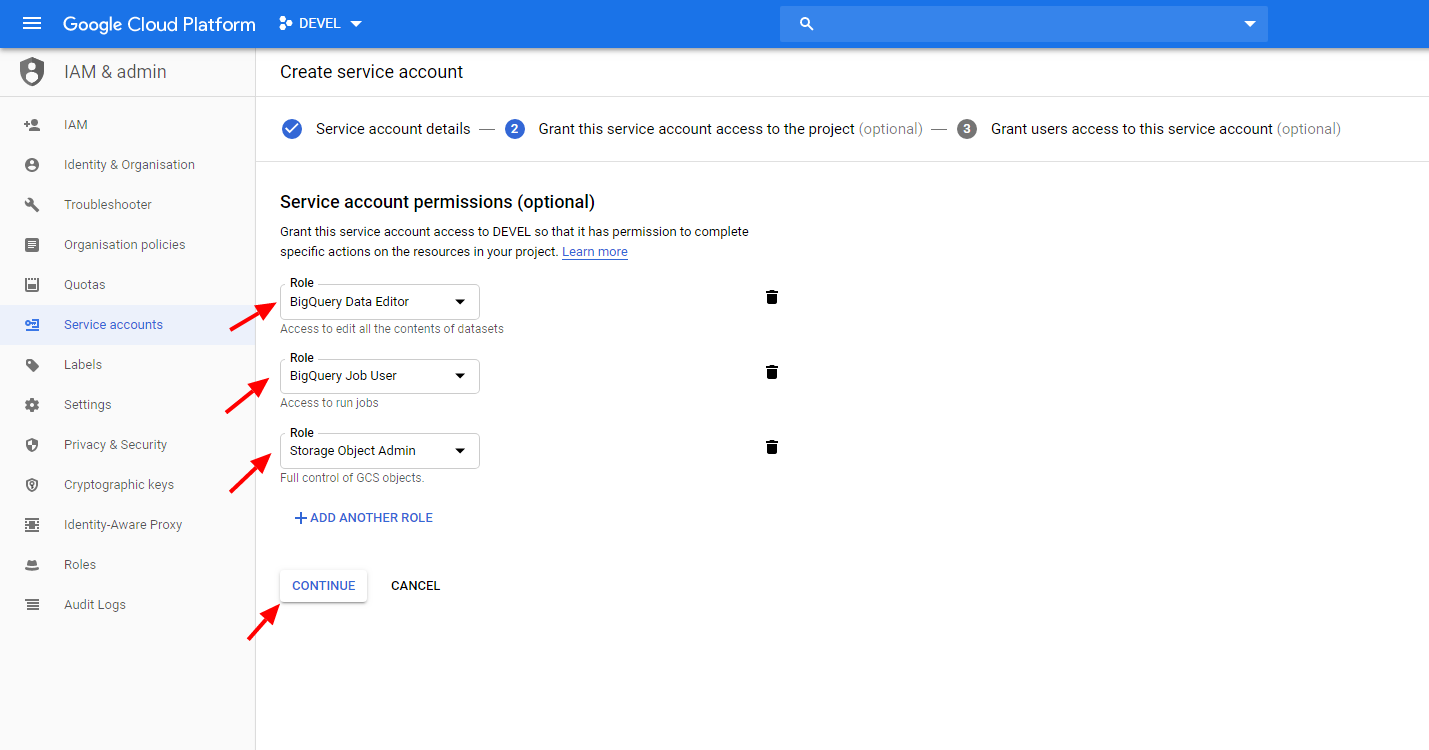

Then add the roles BigQuery Data Editor, BigQuery Job User and Storage Object Admin.
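If you want to double-check the grants, the sketch below maps the three role names to their IAM role IDs and reports which ones a service account is missing. It assumes you have the project's IAM policy as JSON, for example from `gcloud projects get-iam-policy PROJECT_ID --format=json`; the function name `missing_roles` is hypothetical, and the sketch ignores conditional bindings and roles inherited from folders or the organization.

```python
# Role names used in the setup steps, mapped to their IAM role IDs.
REQUIRED_ROLES = {
    "BigQuery Data Editor": "roles/bigquery.dataEditor",
    "BigQuery Job User": "roles/bigquery.jobUser",
    "Storage Object Admin": "roles/storage.objectAdmin",
}


def missing_roles(policy: dict, service_account_email: str) -> list:
    """Return the names of required roles not granted to the service account.

    `policy` is a project IAM policy in JSON form ({"bindings": [...]}).
    Only direct, unconditional bindings on this policy are considered.
    """
    member = f"serviceAccount:{service_account_email}"
    granted = {
        binding["role"]
        for binding in policy.get("bindings", [])
        if member in binding.get("members", [])
    }
    return [name for name, role in REQUIRED_ROLES.items() if role not in granted]
```

An empty list means all three roles are bound directly to the account in that policy.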

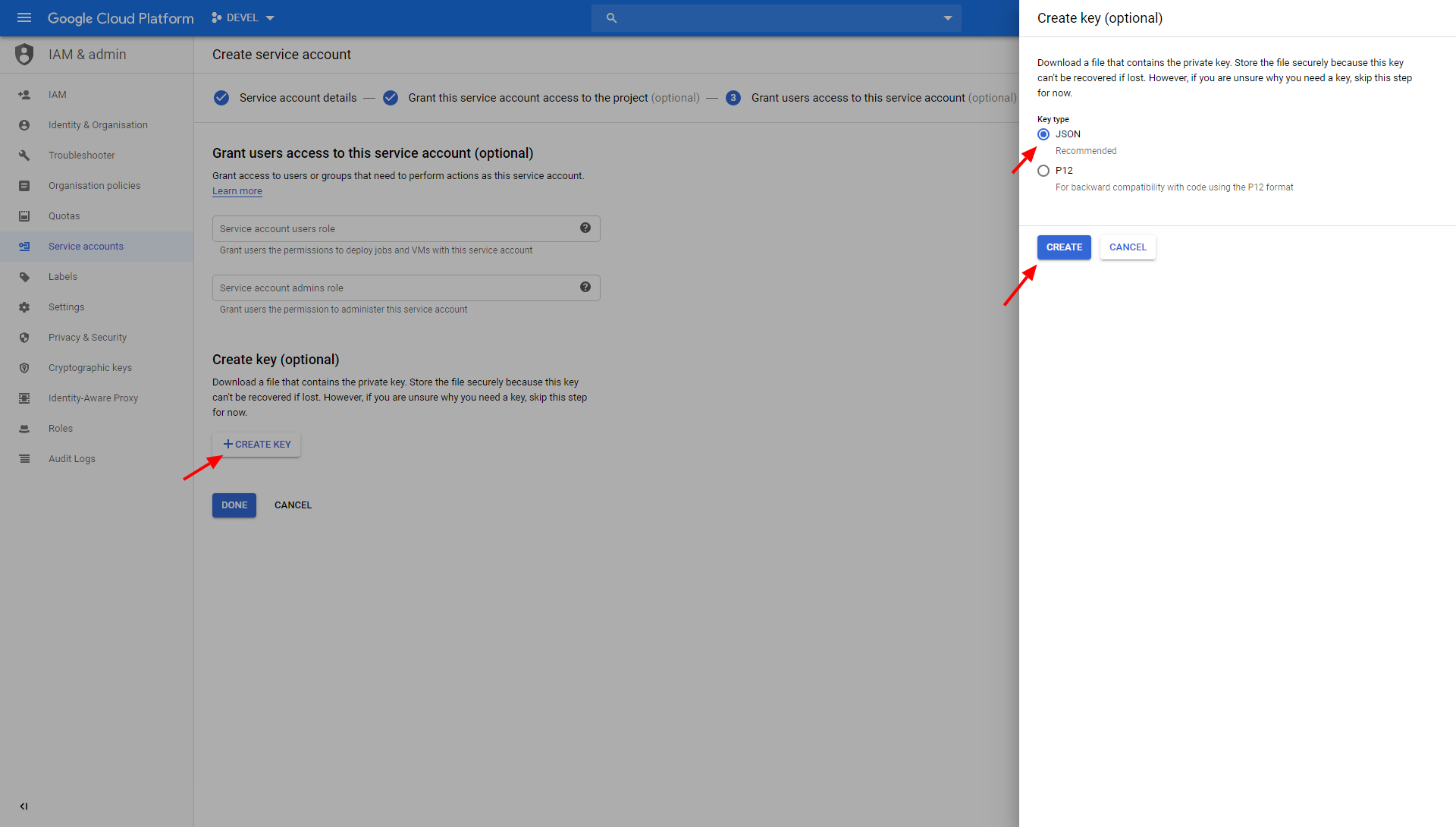

Finally, click + Create Key to create a new JSON key, and then click Create to download it to your computer.
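Before pasting the key into Keboola, you can sanity-check that you downloaded a service account key and not some other JSON file. The following is a rough local check under the assumption that the key uses Google's standard service account fields (`type`, `project_id`, `private_key_id`, `private_key`, `client_email`); `check_service_account_key` is a hypothetical helper, not the validation the connector performs.

```python
import json

# Standard fields of a Google service account JSON key that this sketch checks for.
REQUIRED_KEY_FIELDS = ("type", "project_id", "private_key_id", "private_key", "client_email")


def check_service_account_key(text: str) -> list:
    """Return a list of problems found in the key text (empty if it looks OK)."""
    try:
        key = json.loads(text)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(key, dict):
        return ["top-level JSON value is not an object"]

    problems = [f"missing field: {name}" for name in REQUIRED_KEY_FIELDS if name not in key]
    if "type" in key and key["type"] != "service_account":
        problems.append('field "type" is not "service_account"')
    private_key = key.get("private_key")
    if private_key is not None and (
        not isinstance(private_key, str) or "BEGIN PRIVATE KEY" not in private_key
    ):
        problems.append("private_key does not look like a PEM-encoded key")
    return problems
```

Run it on the file contents, for example `check_service_account_key(open("key.json").read())`, where `key.json` is whatever name your browser saved the key under.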

Bucket

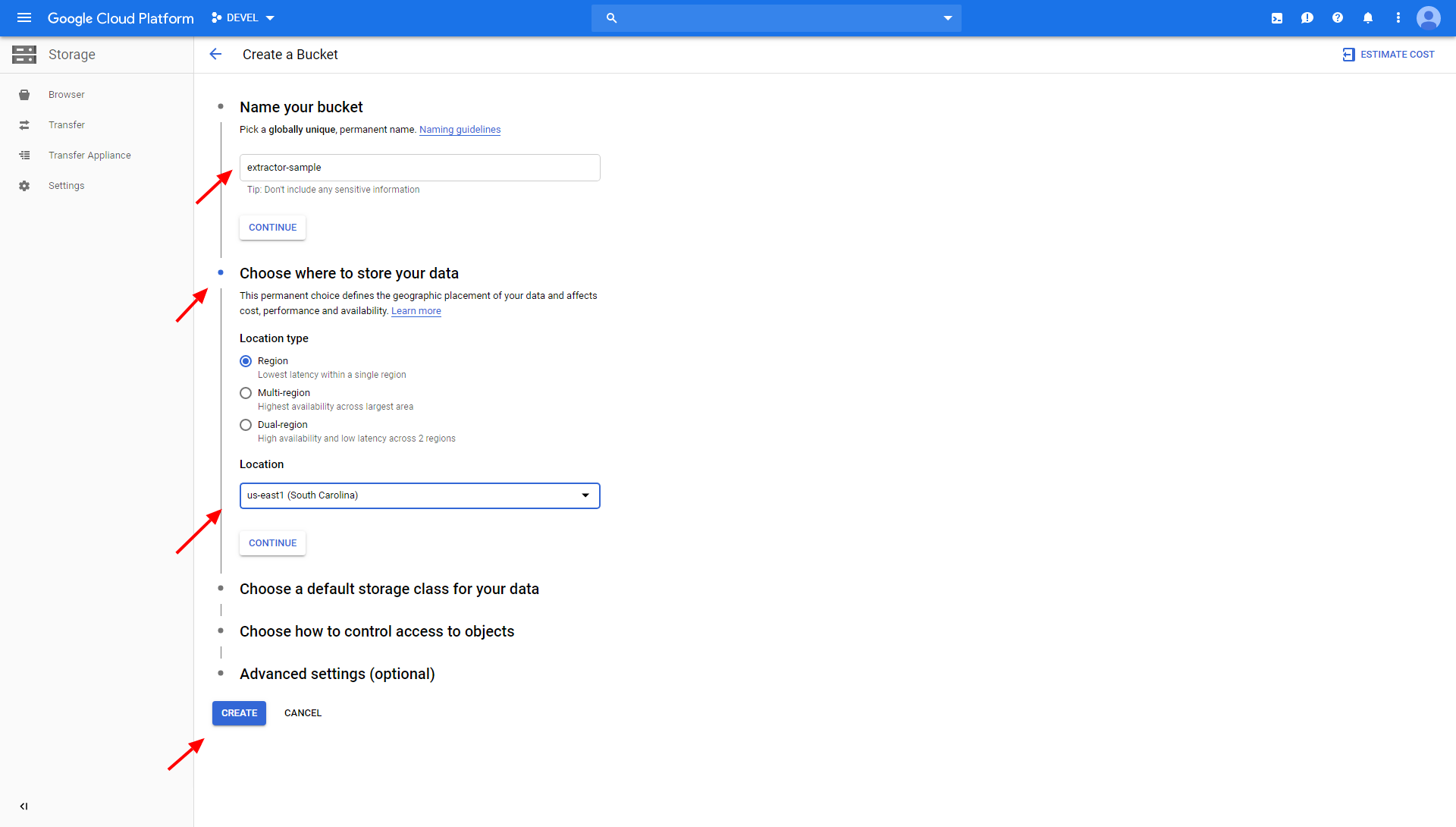

The data source connector uses a Google Cloud Storage bucket as temporary storage for offloading data from BigQuery. Go to Google Cloud Platform Console > Storage > Cloud Storage > Browser and click Create Bucket. Name the bucket and select its location, which must be the same as the location of your dataset.

Do not set a retention policy on the bucket. The bucket contains only temporary data and no retention is needed.
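Both bucket requirements, a location matching the dataset and no retention policy, can be checked against the bucket's metadata. This sketch assumes the metadata is a dict shaped like the Cloud Storage JSON API bucket resource (fields `location` and `retentionPolicy`), and it applies this page's rule literally as a case-insensitive equality of locations; the helper name `check_unload_bucket` is hypothetical.

```python
def check_unload_bucket(bucket: dict, dataset_location: str) -> list:
    """Check bucket metadata against the two requirements in this section.

    `bucket` is assumed to follow the Cloud Storage JSON API bucket resource.
    """
    problems = []
    bucket_location = bucket.get("location", "")
    # Cloud Storage reports locations in upper case (e.g. "EU", "US-CENTRAL1"),
    # while BigQuery regions are usually written in lower case (e.g. "us-central1").
    if bucket_location.upper() != dataset_location.upper():
        problems.append(
            f"bucket location {bucket_location!r} differs from dataset location {dataset_location!r}"
        )
    if "retentionPolicy" in bucket:
        problems.append("bucket has a retention policy; remove it")
    return problems
```

An empty result means the bucket satisfies both requirements as stated above.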

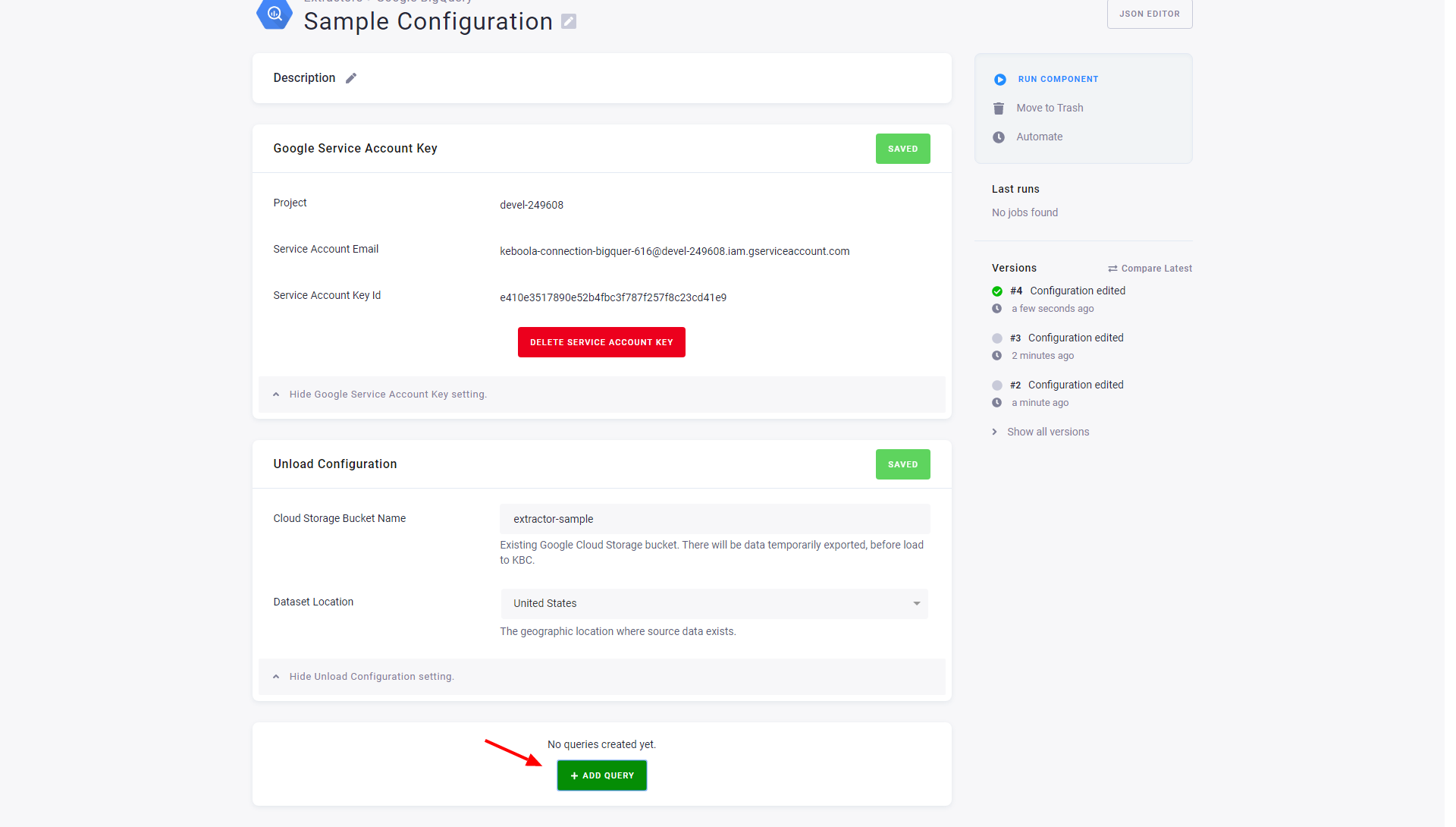

Configure Extraction

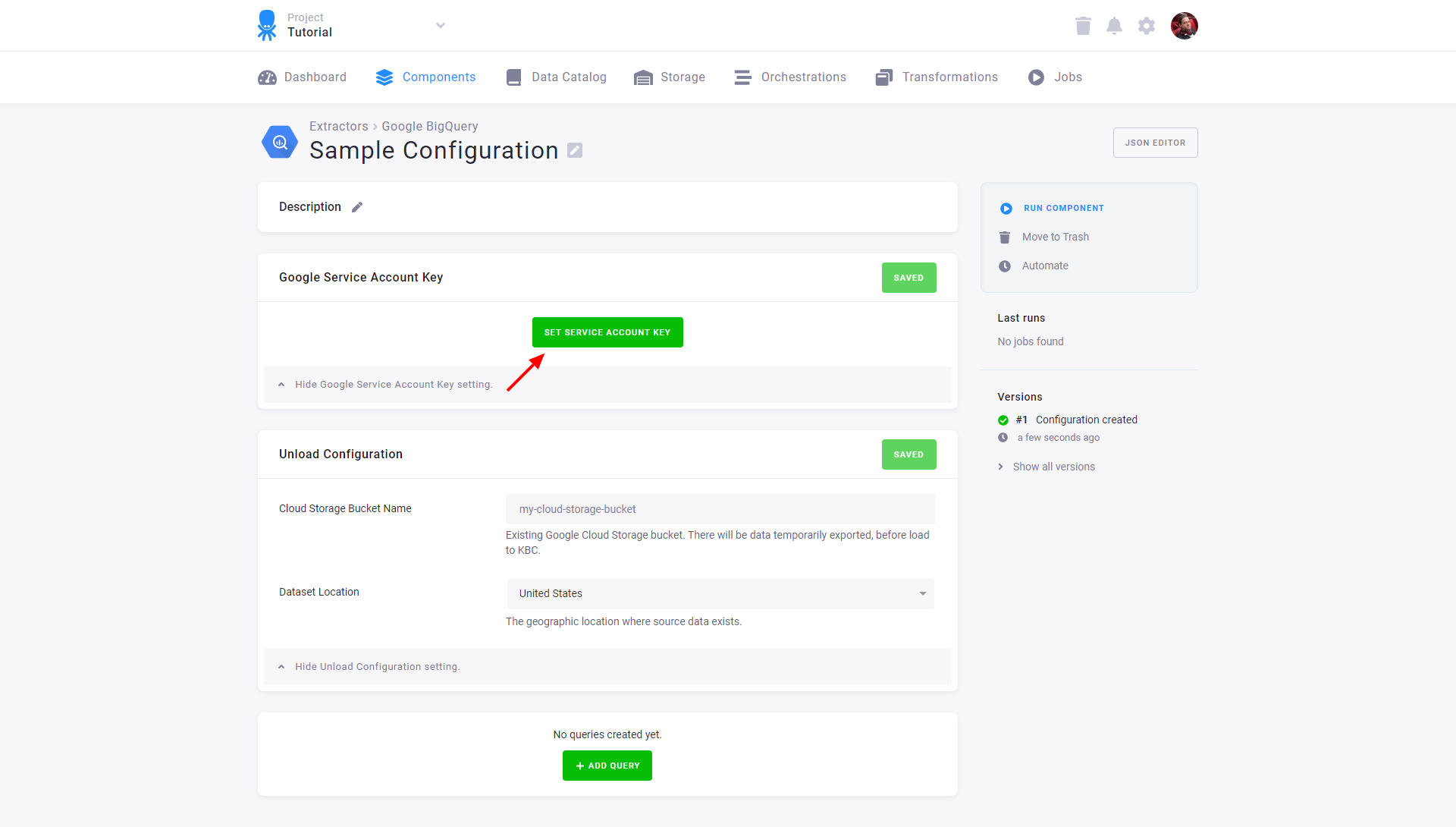

Create a new configuration of the BigQuery connector. Click Set Service Account Key.

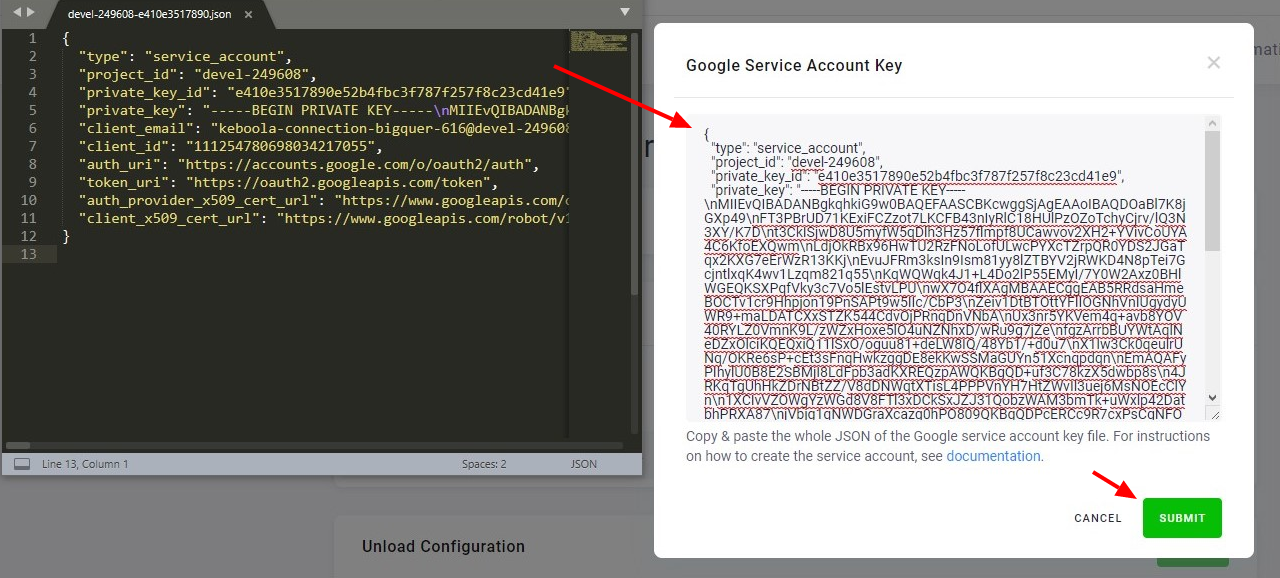

Open the downloaded key in a text editor, copy and paste its contents into the input field, and click Submit.

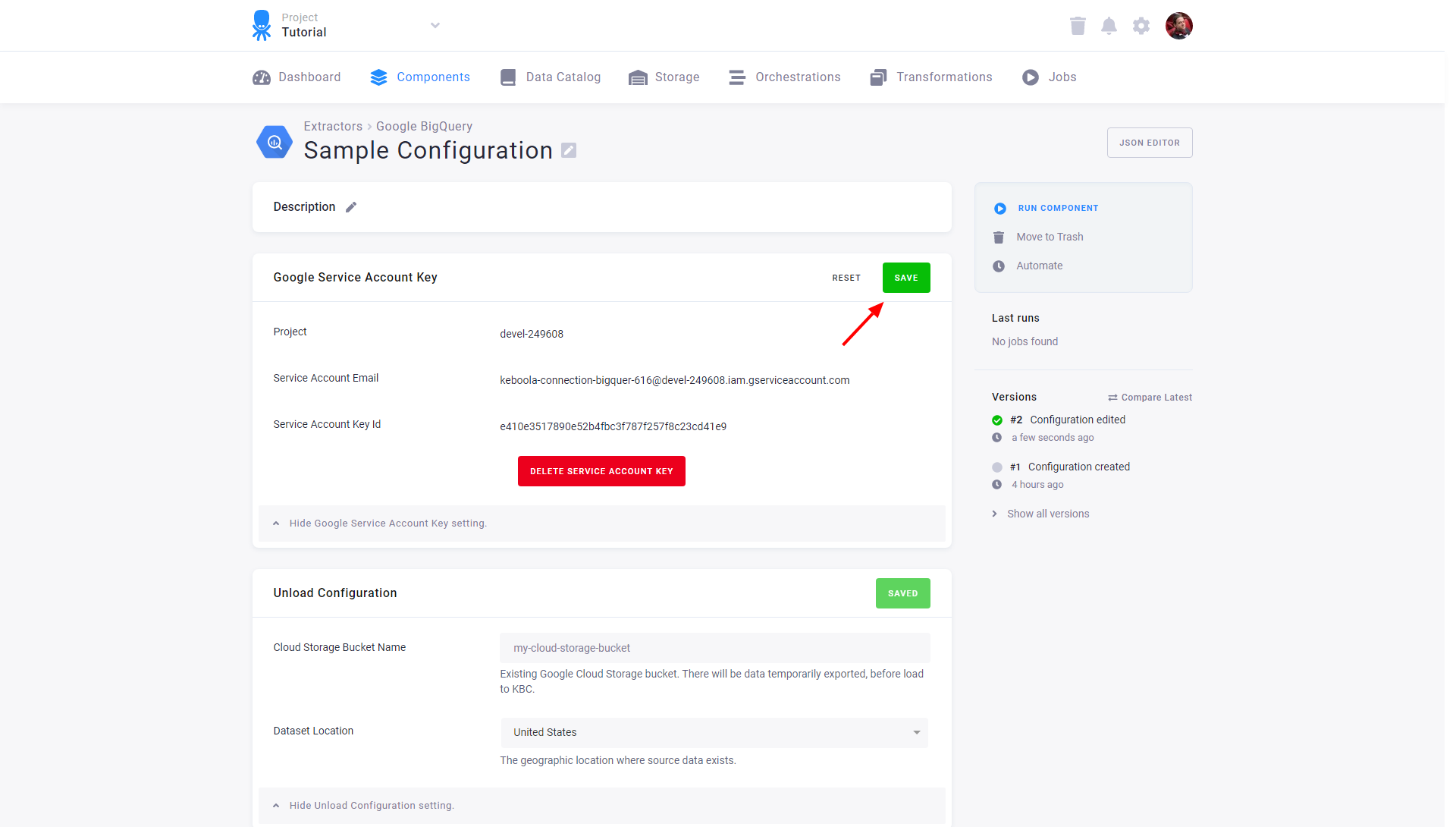

Click Save to store the credentials.

Important: The private key is stored in an encrypted form and only the non-sensitive parts are visible in the UI for your verification. The key can be deleted or replaced by a new one at any time.
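As an illustration of what "only the non-sensitive parts are visible" means, the sketch below masks secret fields of a key before display. Which fields Keboola actually treats as sensitive is not stated on this page; the set used here (`private_key`, `private_key_id`) and the helper name `visible_key_parts` are assumptions.

```python
# Assumption: the fields treated as sensitive; not documented on this page.
SENSITIVE_FIELDS = {"private_key", "private_key_id"}


def visible_key_parts(key: dict) -> dict:
    """Return a copy of a service account key with sensitive values masked."""
    return {
        name: "***" if name in SENSITIVE_FIELDS else value
        for name, value in key.items()
    }
```

Fields such as `client_email` and `project_id` stay readable, which is enough to verify that the right key was stored.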

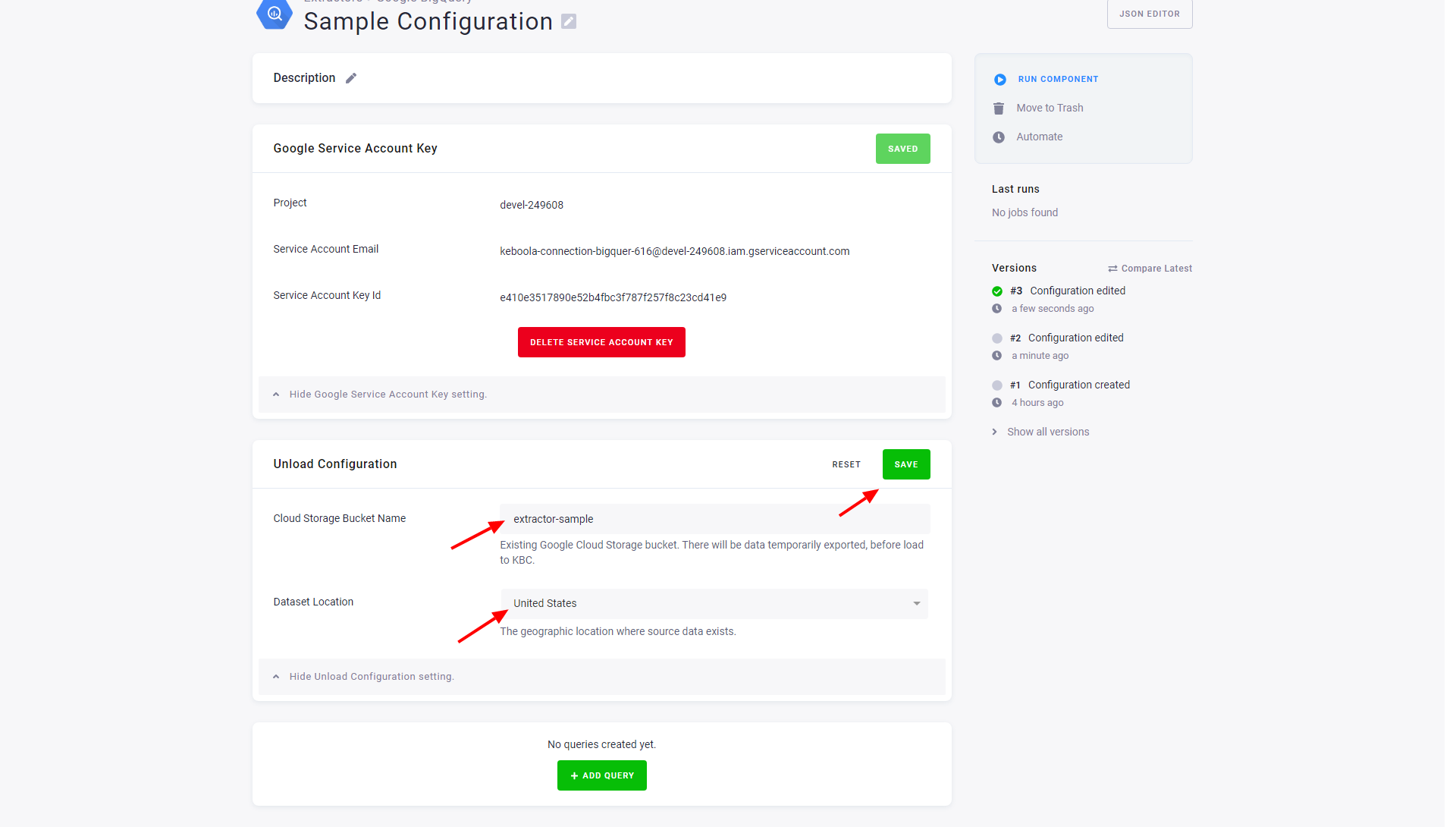

In the Unload Configuration section, enter the name of the bucket you created earlier as the Cloud Storage Bucket Name, and select the correct Dataset Location. Click Save.
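A common slip here is entering something other than a plain bucket name, such as a `gs://` URI. The sketch below checks a subset of Google's published Cloud Storage bucket naming rules (length, allowed characters, the "goog"/"google" restriction, no IP-address form); it does not cover every rule, and `bucket_name_problems` is a hypothetical helper, not part of the connector.

```python
import ipaddress
import re

# Lowercase letters, digits, '-', '_' and '.', starting and ending with a letter or digit.
_BUCKET_NAME = re.compile(r"[a-z0-9][a-z0-9._-]*[a-z0-9]")


def bucket_name_problems(name: str) -> list:
    """Check a subset of Cloud Storage bucket naming rules."""
    problems = []
    max_len = 222 if "." in name else 63
    if not 3 <= len(name) <= max_len:
        problems.append(f"length must be between 3 and {max_len} characters")
    if not _BUCKET_NAME.fullmatch(name):
        problems.append(
            "use only lowercase letters, digits, '-', '_' or '.', "
            "starting and ending with a letter or digit"
        )
    if any(len(part) > 63 for part in name.split(".")):
        problems.append("each dot-separated part must be at most 63 characters")
    if name.startswith("goog") or "google" in name:
        problems.append('names cannot start with "goog" or contain "google"')
    try:
        ipaddress.ip_address(name)
        problems.append("names cannot look like an IP address")
    except ValueError:
        pass  # not an IP address, which is what we want
    return problems
```

For instance, `bucket_name_problems("gs://kbc-unload")` reports a character problem, because `:` and `/` are not allowed in bucket names.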

Configure Queries

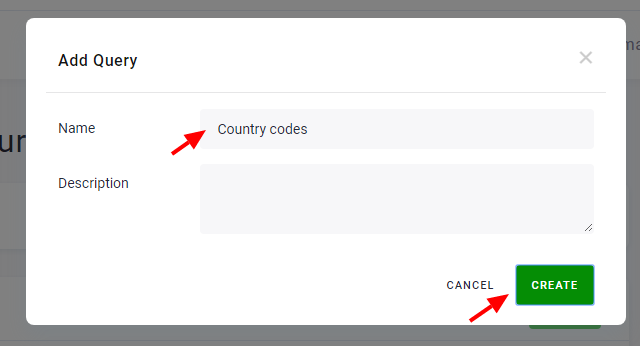

Start by clicking Add Query.

Name the query and click Create.

To learn how to modify your configuration, go to the SQL databases section.

© 2026 Keboola